A team from Art-A-Hack™ Summer 2016

Team members:

- Eva Lee

- Aaron Trocola

- Gabriel Ibagon

- Gal Nissim

- Pat Shiu

The Dual Brains team created a real-time visual performance driven by the brain data of two performers. The team was inspired by recent neuroscientific research indicating that human brains are fundamentally hardwired for empathy.

The goal was to present a creative view of human neural interaction, taking into account the power of empathy. The performers focused their minds on emotionally charged memories, first without physical contact, and then while holding hands.

Spectators saw the change in neural states visualized through projections. They also heard the effects on the performers’ bodies, as sounds were generated from brain activity (EEG data) and heart activity (ECG data).

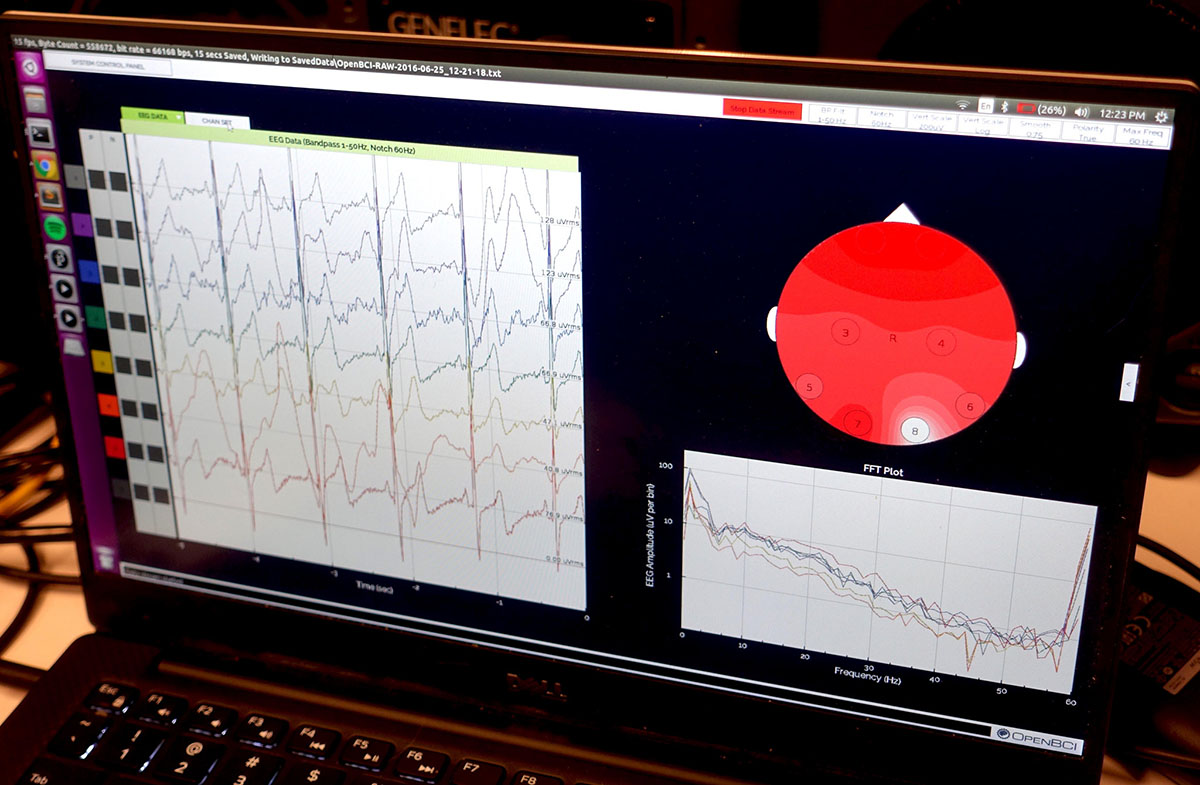

The team created their prototype using OpenBCI, an open-source brain-computer interfacing toolkit. Data from the OpenBCI headsets was translated and passed to a real-time graphics interface built in the Processing creative coding environment.

Any project with this many variables will face challenges. Early on, the team wrestled with hardware issues, and designer Aaron Trocola created multiple versions of custom-built headsets before finalizing the optimal placement of the brain sensors.

At the same time, programmer Gabriel Ibagon focused on the real-time translation of brain wave data. This data was passed to Processing, where Pat Shiu and Gal Nissim worked to draw the visuals dynamically. This real-time syncing ensured that the visuals matched the emotional subtext behind the data.
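To make the idea of translating brain wave data into a drawing parameter more concrete, here is a minimal sketch in Python. It is not the team's code. The 250 Hz sample rate, the 8 to 12 Hz alpha band, the scaling range, and the function names `band_power` and `to_visual_level` are all assumptions chosen for illustration. The sketch estimates how much power a short window of samples carries in one frequency band, then scales that number to a 0 to 1 level that a renderer could use for brightness or size.

```python
import math


def band_power(samples, sample_rate, low_hz, high_hz):
    """Mean power of the DFT bins whose frequency lies in [low_hz, high_hz].

    Hypothetical helper: a plain discrete Fourier transform over one window.
    """
    n = len(samples)
    if n == 0:
        return 0.0
    # Remove the DC offset so a constant voltage does not count as signal.
    mean = sum(samples) / n
    centered = [s - mean for s in samples]
    powers = []
    for k in range(1, n // 2 + 1):
        freq = k * sample_rate / n
        if low_hz <= freq <= high_hz:
            re = sum(x * math.cos(2 * math.pi * k * i / n)
                     for i, x in enumerate(centered))
            im = sum(x * math.sin(2 * math.pi * k * i / n)
                     for i, x in enumerate(centered))
            powers.append((re * re + im * im) / (n * n))
    return sum(powers) / len(powers) if powers else 0.0


def to_visual_level(power, floor, ceiling):
    """Clamp and scale a band power to the range 0..1 for drawing."""
    if ceiling <= floor:
        return 0.0
    return min(1.0, max(0.0, (power - floor) / (ceiling - floor)))


# Synthetic one-second window: a pure 10 Hz sine standing in for EEG samples.
SAMPLE_RATE_HZ = 250  # assumed sample rate
window = [math.sin(2 * math.pi * 10 * i / SAMPLE_RATE_HZ)
          for i in range(SAMPLE_RATE_HZ)]

alpha = band_power(window, SAMPLE_RATE_HZ, 8, 12)       # assumed alpha band
level = to_visual_level(alpha, floor=0.0, ceiling=0.1)  # assumed scaling range
```

In a live setting, a value like `level` would be recomputed for each new window of samples and sent on to the graphics program. The floor and ceiling would need calibrating per performer, since signal strength varies from person to person.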

A work-in-progress prototype was developed and demonstrated for visitors at the final Art-A-Hack showcase in July. The team later recorded another performance in the same space, at Thoughtworks New York.

The team plans to continue developing the project and to explore concepts of neuro-social interaction further. Their goal moving forward is to make the project accessible to gallerists and curators for display. More information is available on team lead Eva Lee’s blog.